Raw data

The Video SDK lets you access real-time raw audio, video, and share data during a session, so you can apply additional effects and enhance the user experience. Use a processor component to apply effects or transformations to each piece of data in real time.

An example of raw share data is when a user shares their screen.

- Receive and send raw share video data using the same logic as video data, except get the share pipe instead of the video pipe in the raw data pipe method.

- Receive and send raw share audio data using the same method used for raw audio data.

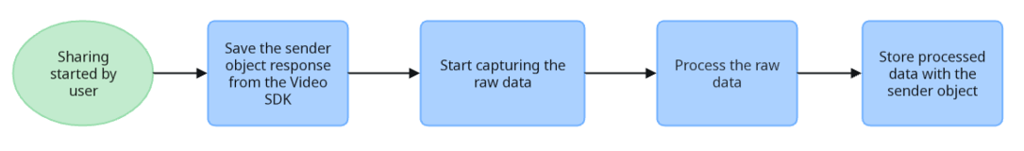

Follow the process outlined in this flowchart to capture and process raw data.

Select raw data memory mode

To use raw data of any type, you must first select a memory mode. The SDK supports heap-based and stack-based memory modes.

Stack-based memory

- Variables are allocated automatically and deallocated after the data leaves scope.

- Variables are not accessible from or transferable to other threads.

- Typically has faster access than heap-based memory allocation.

- Memory space is managed by the CPU and will not become fragmented.

- Variables cannot be resized.

See Stack-based memory allocation for details.

Specify with:

ZoomVideoSDKRawDataMemoryMode.ZoomVideoSDKRawDataMemoryModeStack
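Because stack-based data is deallocated once it leaves scope and cannot be handed to other threads, code that needs a frame after its callback returns would generally have to copy it first. The following is a minimal, SDK-independent sketch that uses only `java.nio`; `RawDataCopy` and `copyOf` are hypothetical names and not part of the Video SDK.

```java
import java.nio.ByteBuffer;

final class RawDataCopy {
    private RawDataCopy() {}

    /**
     * Copies the remaining bytes of src into a new heap array.
     * src's own position and limit are left untouched.
     */
    static byte[] copyOf(ByteBuffer src) {
        ByteBuffer view = src.duplicate(); // shares content, independent position
        byte[] out = new byte[view.remaining()];
        view.get(out);
        return out;
    }
}
```

The returned array is owned by your code, so it can be queued for a worker thread without depending on the lifetime of the original buffer.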

Heap-based memory

- Variables are allocated and deallocated manually. You must allocate and free variables to avoid memory leaks.

- Variables can be accessed globally.

- Has relatively slower access than stack-based memory allocation.

- Has no guarantee on the efficiency of memory space and can become fragmented.

- Variables can be resized.

See Heap-based dynamic memory allocation for details.

Specify with:

ZoomVideoSDKRawDataMemoryMode.ZoomVideoSDKRawDataMemoryModeHeap

Specify memory mode

After determining which memory mode is right for you, you must specify it when the SDK is initialized. Note that this must be done for audio, video, and share individually. To specify a raw data memory mode, provide one of these enum cases to the ZoomVideoSDKInitParams during SDK initialization.

val modeHeap = ZoomVideoSDKRawDataMemoryMode.ZoomVideoSDKRawDataMemoryModeHeap

val params = ZoomVideoSDKInitParams().apply {

videoRawDataMemoryMode = modeHeap // Set video memory to heap

audioRawDataMemoryMode = modeHeap // Set audio memory to heap

shareRawDataMemoryMode = modeHeap // Set share memory to heap

}

ZoomVideoSDKRawDataMemoryMode modeHeap = ZoomVideoSDKRawDataMemoryMode.ZoomVideoSDKRawDataMemoryModeHeap;

ZoomVideoSDKInitParams params = new ZoomVideoSDKInitParams();

params.videoRawDataMemoryMode = modeHeap; // Set video memory to heap

params.audioRawDataMemoryMode = modeHeap; // Set audio memory to heap

params.shareRawDataMemoryMode = modeHeap; // Set share memory to heap

Receive raw video data

Raw video data is encoded in the YUV420p format, a planar pixel format commonly used by renderers based on OpenGL ES.

To access and modify the video data, you will need to do the following:

- Implement an instance of the ZoomVideoSDKRawDataPipeDelegate.

- Use callback functions provided by the ZoomVideoSDKRawDataPipeDelegate to receive each frame of the raw video data.

- Pass the delegate into the video pipe of a specific user.

```kotlin

val dataDelegate = object : ZoomVideoSDKRawDataPipeDelegate {

override fun onRawDataStatusChanged(status: ZoomVideoSDKRawDataPipeDelegate.RawDataStatus?) {

}

override fun onRawDataFrameReceived(rawData: ZoomVideoSDKVideoRawData?) {

}

}

val pipe = user.videoPipe

pipe.subscribe(ZoomVideoSDKVideoResolution.VideoResolution_360P, dataDelegate)

```

Each frame of video data is made available through the ZoomVideoSDKVideoRawData object. Various pieces of data can be accessed through this object in onRawDataFrameReceived.

```java

int height = rawData.getStreamHeight();

int width = rawData.getStreamWidth();

ByteBuffer uBuffer = rawData.getuBuffer();

ByteBuffer vBuffer = rawData.getvBuffer();

ByteBuffer yBuffer = rawData.getyBuffer();

```
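In YUV420p, the Y plane holds one byte per pixel, and the U and V planes are each subsampled by two in both directions. The sketch below shows how a single pixel could be read from the three planes and converted to RGB. It assumes tightly packed planes (row stride equal to plane width) and BT.601 full-range coefficients; neither assumption is confirmed by the text above, and `Yuv420pReader` is a hypothetical helper.

```java
import java.nio.ByteBuffer;

final class Yuv420pReader {
    private Yuv420pReader() {}

    /** Number of bytes in one chroma (U or V) plane. */
    static int chromaPlaneSize(int width, int height) {
        return ((width + 1) / 2) * ((height + 1) / 2);
    }

    /** Returns the pixel at (x, y) packed as 0xRRGGBB. */
    static int rgbAt(ByteBuffer yPlane, ByteBuffer uPlane, ByteBuffer vPlane,
                     int width, int x, int y) {
        int chromaWidth = (width + 1) / 2;
        int chromaIndex = (y / 2) * chromaWidth + (x / 2);
        int luma = yPlane.get(y * width + x) & 0xFF;
        int cb = (uPlane.get(chromaIndex) & 0xFF) - 128;
        int cr = (vPlane.get(chromaIndex) & 0xFF) - 128;
        int r = clamp(Math.round(luma + 1.402f * cr));
        int g = clamp(Math.round(luma - 0.344136f * cb - 0.714136f * cr));
        int b = clamp(Math.round(luma + 1.772f * cb));
        return (r << 16) | (g << 8) | b;
    }

    private static int clamp(int value) {
        return Math.max(0, Math.min(255, value));
    }
}
```

For a 640 by 360 frame under these assumptions, the Y plane is 230400 bytes and each chroma plane is 57600 bytes.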

Remove backgrounds with an alpha channel

Session hosts who hold a raw streaming token can enable alpha channel mode, which requests that Zoom's Video SDK send both the original video data and an alpha mask demarking the edge of a user's body. The Video SDK can then use the alpha mask and raw data to remove the user's background and render session users natively in the host's virtual world.

Turn on alpha channel mode

Before turning on the alpha channel, call the following:

- canEnableAlphaChannelMode to check whether alpha channel mode can be turned on

- isDeviceSupportAlphaChannelMode to check whether the device hardware is capable of supporting video alpha mode

To turn the alpha channel on or off, call enableAlphaChannelMode.

val videoHelper = ZoomVideoSDK.getInstance().getVideoHelper()

// Only turn alpha channel mode on if it is not already enabled.

if (!videoHelper.isAlphaChannelModeEnabled) {

    // Confirm device hardware support and that the mode can be enabled.

    if (videoHelper.isDeviceSupportAlphaChannelMode && videoHelper.canEnableAlphaChannelMode()) {

        videoHelper.enableAlphaChannelMode(true) // true/false to enable/disable alpha channel

    }

}

ZoomVideoSDKVideoHelper videoHelper = ZoomVideoSDK.getInstance().getVideoHelper();

// Only turn alpha channel mode on if it is not already enabled.

if (!videoHelper.isAlphaChannelModeEnabled()) {

    // Confirm device hardware support and that the mode can be enabled.

    if (videoHelper.isDeviceSupportAlphaChannelMode() && videoHelper.canEnableAlphaChannelMode()) {

        videoHelper.enableAlphaChannelMode(true); // true/false to enable/disable alpha channel

    }

}

Alpha channel callbacks

The onVideoAlphaChannelStatusChanged callback is triggered when alpha channel mode is turned on or off. After you turn on the alpha channel, the raw video data delivered to the same onRawDataFrameReceived callback includes two more values: alphaBuffer, the alpha data buffer, and alphaBufferLen, the alpha data buffer length.

Now that you've received the data, edit the raw data to suit your use case.

override fun onVideoAlphaChannelStatusChanged(isAlphaModeOn: Boolean) {

}

override fun onRawDataFrameReceived(rawData: ZoomVideoSDKVideoRawData?) {

if (rawData == null) {

return

}

rawData.alphaBuffer

}

@Override

public void onVideoAlphaChannelStatusChanged(boolean isAlphaModeOn) {

}

@Override

public void onRawDataFrameReceived(final ZoomVideoSDKVideoRawData rawData) {

rawData.getAlphaBuffer();

}
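One way to use the alpha buffer is to write it into the alpha channel of pixels that have already been converted to ARGB, so the background becomes transparent when the image is composited. The sketch below assumes the alpha buffer holds one byte per pixel in row-major order, with 0 for background and 255 for fully opaque; that layout is an assumption and is not specified in the text above. `AlphaMask` is a hypothetical helper.

```java
import java.nio.ByteBuffer;

final class AlphaMask {
    private AlphaMask() {}

    /** Replaces the alpha byte of each 0xAARRGGBB pixel with the mask value. */
    static void apply(int[] argbPixels, ByteBuffer alpha) {
        int count = Math.min(argbPixels.length, alpha.remaining());
        int base = alpha.position();
        for (int i = 0; i < count; i++) {
            int a = alpha.get(base + i) & 0xFF; // absolute read, position unchanged
            argbPixels[i] = (a << 24) | (argbPixels[i] & 0x00FFFFFF);
        }
    }
}
```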

Receive raw audio data

Through your implementation of ZoomVideoSDKDelegate, you can access mixed (combined audio output from one or more users in a session), per-user, and shared raw audio data.

Unlike raw video data, raw audio data will default to stack-based memory if you do not specify a memory mode.

- The virtual speaker allows access to audio data received from other users in the session. This data represents what a user would hear played through the device's speakers.

- The virtual mic allows audio data for the current user to be sent to the session programmatically instead of from the SDK capturing it through an audio input device.

Follow these steps to receive raw audio if it was sent through ZoomVideoSDKVirtualAudioMic.

- Create an instance of ZoomVideoSDKVirtualAudioSpeaker.

- Pass that instance into ZoomVideoSDKSessionContext.

- Access raw data in each callback method.

To access raw audio data, you will need to listen for the following callbacks in your listener.

override fun onMixedAudioRawDataReceived(rawData: ZoomVideoSDKAudioRawData?) {

// Access mixed data for the whole session here

}

override fun onOneWayAudioRawDataReceived(rawData: ZoomVideoSDKAudioRawData?, user: ZoomVideoSDKUser) {

// Access user-specific raw data here from the provided user

}

override fun onShareAudioRawDataReceived(rawData: ZoomVideoSDKAudioRawData?) {

// Access share audio raw data here.

}

@Override

public void onMixedAudioRawDataReceived(ZoomVideoSDKAudioRawData rawData) {

// Access mixed data for the whole session here.

}

From within the callbacks, you can access the data buffer with the rawData.buffer property.
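As an example of processing that buffer, the sketch below computes the RMS level of one chunk of audio. It assumes the buffer contains 16-bit signed little-endian PCM samples, which is a common raw audio layout but is an assumption here; `PcmLevel` is a hypothetical helper.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;

final class PcmLevel {
    private PcmLevel() {}

    /** Returns the RMS level in the range 0.0 to 1.0 for 16-bit PCM samples. */
    static double rms(ByteBuffer buffer, int lengthInBytes) {
        // duplicate() does not inherit byte order, so set it explicitly.
        ByteBuffer pcm = buffer.duplicate().order(ByteOrder.LITTLE_ENDIAN);
        int samples = Math.min(lengthInBytes, pcm.remaining()) / 2;
        if (samples == 0) {
            return 0.0;
        }
        double sumSquares = 0.0;
        for (int i = 0; i < samples; i++) {
            double s = pcm.getShort() / 32768.0;
            sumSquares += s * s;
        }
        return Math.sqrt(sumSquares / samples);
    }
}
```

A value like this could drive a volume meter or a simple silence detector for the mixed or per-user streams.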

Receive raw share data

Receive raw share video and audio data, for example, when someone is sharing their screen, similarly to how you receive raw video and raw audio data.

Receive raw share video data

Follow the same steps and code used to receive raw video data, except get the share pipe instead of the video pipe. See the following code for an example.

// Previous code same as receive raw video

ZoomVideoSDKRawDataPipe pipe = user.getSharePipe();

pipe.subscribe(ZoomVideoSDKVideoResolution.VideoResolution_360P, dataDelegate);

Receive raw share audio data

Receive raw share audio data in the same way as you receive raw audio data.

Send raw video data

In addition to being able to receive and process raw video data, you may also send raw as well as processed video data of a user from the user's device. To do this, you must provide an implementation of ZoomVideoSDKVideoSource in your ZoomVideoSDKSessionContext object when joining a session.

The Video SDK for Android supports receiving videos in the resolutions enumerated in the ZoomVideoSDKVideoResolution enum.

val source = object : ZoomVideoSDKVideoSource {

override fun onStopSend() {

}

override fun onPropertyChange(capabilityList: MutableList<ZoomVideoSDKVideoCapability>, capability: ZoomVideoSDKVideoCapability?) {

}

override fun onUninitialized() {

}

override fun onStartSend() {

}

override fun onInitialize(sender: ZoomVideoSDKVideoSender?, capabilityList: MutableList<ZoomVideoSDKVideoCapability>, capability: ZoomVideoSDKVideoCapability?) {

sender?.sendVideoFrame( /* Your raw data buffer goes here -> */ buffer, width, height, frameLength, rotation)

}

}

val params = ZoomVideoSDKSessionContext().apply {

externalVideoSource = source

}

In the onInitialize(ZoomVideoSDKVideoSender sender, List<ZoomVideoSDKVideoCapability> list, ZoomVideoSDKVideoCapability ZoomVideoSDKVideoCapability) callback:

- The List<ZoomVideoSDKVideoCapability> list parameter is the supported capability list. It combines the supported resolution of the session and the device to create a list of supported capabilities.

- The ZoomVideoSDKVideoCapability ZoomVideoSDKVideoCapability parameter is the suggested capability. It combines the maximum capability of the session itself and the device to get the real maximum as the suggested capability.
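The buffer, width, height, and frameLength arguments passed to sendVideoFrame are placeholders in the example above. As an illustration of how they relate, the sketch below fills a buffer with a single solid color in planar YUV 4:2:0 order (Y plane, then U, then V), which gives a frame length of width * height * 3 / 2 bytes for even dimensions. That the sender expects exactly this layout is an assumption based on the YUV420p format described for received video; `I420Frame` is a hypothetical helper.

```java
import java.nio.ByteBuffer;

final class I420Frame {
    private I420Frame() {}

    /** Total bytes in one planar YUV 4:2:0 frame. */
    static int frameLength(int width, int height) {
        int chromaSize = ((width + 1) / 2) * ((height + 1) / 2);
        return width * height + 2 * chromaSize;
    }

    /** Builds a frame in which every pixel has the same Y, U, and V values. */
    static ByteBuffer solid(int width, int height, int y, int u, int v) {
        int lumaSize = width * height;
        int chromaSize = ((width + 1) / 2) * ((height + 1) / 2);
        // A direct buffer is used on the assumption that native code reads it.
        ByteBuffer frame = ByteBuffer.allocateDirect(lumaSize + 2 * chromaSize);
        for (int i = 0; i < lumaSize; i++) frame.put((byte) y);
        for (int i = 0; i < chromaSize; i++) frame.put((byte) u);
        for (int i = 0; i < chromaSize; i++) frame.put((byte) v);
        frame.flip(); // make the written bytes readable from position 0
        return frame;
    }
}
```

With these assumptions, `I420Frame.solid(640, 360, 16, 128, 128)` would produce a black frame whose `remaining()` value could be passed as the frame length.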

Pre-process raw video data

Pre-process raw video data using onPreProcessRawData within ZoomVideoSDKVideoSourcePreProcessor. Assign an implementation to the preProcessor field of your ZoomVideoSDKSessionContext before joining a session.

val preProcessor = object : ZoomVideoSDKVideoSourcePreProcessor {

override fun onPreProcessRawData(rawData: ZoomVideoSDKPreProcessRawData?) {

// Perform preprocess actions here.

}

}

val params = ZoomVideoSDKSessionContext().apply {

this.preProcessor = preProcessor // "this." targets the context field; the bare name would resolve to the local val

}

ZoomVideoSDKVideoSourcePreProcessor preProcessor = new ZoomVideoSDKVideoSourcePreProcessor() {

@Override

public void onPreProcessRawData(ZoomVideoSDKPreProcessRawData rawData) {

// Perform preprocess actions here.

}

};

ZoomVideoSDKSessionContext params = new ZoomVideoSDKSessionContext();

params.preProcessor = preProcessor;
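A pre-processing step typically edits a frame's planes in place before the frame is sent. The sketch below brightens a luma (Y) plane held in a `ByteBuffer`. How the Y plane is obtained from ZoomVideoSDKPreProcessRawData is not covered in the text above, so the buffer is taken as a plain parameter; `LumaAdjust` is a hypothetical helper.

```java
import java.nio.ByteBuffer;

final class LumaAdjust {
    private LumaAdjust() {}

    /** Adds delta to every luma byte in place, clamping to the 0..255 range. */
    static void brighten(ByteBuffer yPlane, int delta) {
        for (int i = yPlane.position(); i < yPlane.limit(); i++) {
            int value = (yPlane.get(i) & 0xFF) + delta; // read as unsigned
            yPlane.put(i, (byte) Math.max(0, Math.min(255, value)));
        }
    }
}
```

Absolute `get` and `put` calls are used so the buffer's position stays where the caller left it.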

Send raw audio data

In addition to being able to receive and process raw audio data, you may also send raw or processed audio data of a user from the user's device.

To do this:

- Create an instance of ZoomVideoSDKVirtualAudioMic.

- Pass that instance into ZoomVideoSDKSessionContext.

- Obtain ZoomVideoSDKAudioRawDataSender from the onMicInitialize callback.

- Use the send method to send raw audio data.

val virtualMic = object : ZoomVideoSDKVirtualAudioMic {

override fun onMicInitialize(sender: ZoomVideoSDKAudioRawDataSender?) {

// Store sender for later use

sender?.send(rawData, lengthInBytes, sampleRate)

}

override fun onMicStartSend() {

// You can now send audio

}

override fun onMicStopSend() {

// You can no longer send audio

}

override fun onMicUninitialized() {

// Mic is no longer active

}

}

val virtualSpeaker = object : ZoomVideoSDKVirtualAudioSpeaker {

override fun onVirtualSpeakerMixedAudioReceived(rawData: ZoomVideoSDKAudioRawData?) {

// Access session audio here

}

override fun onVirtualSpeakerOneWayAudioReceived(rawData: ZoomVideoSDKAudioRawData?, user: ZoomVideoSDKUser?) {

// Access user-specific audio here

}

override fun onVirtualSpeakerShareAudioReceived(p0: ZoomVideoSDKAudioRawData?) {

}

}

val params = ZoomVideoSDKSessionContext().apply {

virtualAudioMic = virtualMic

virtualAudioSpeaker = virtualSpeaker

}
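The rawData, lengthInBytes, and sampleRate arguments in the example above are placeholders. As one way to produce them, the sketch below generates a sine tone as 16-bit signed little-endian mono PCM. That sample format is an assumption about what the sender accepts, and `ToneGenerator` is a hypothetical helper.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;

final class ToneGenerator {
    private ToneGenerator() {}

    /** Generates durationMs of a sine tone as 16-bit little-endian mono PCM. */
    static ByteBuffer sine(double frequencyHz, int sampleRate, int durationMs) {
        int samples = (int) ((long) sampleRate * durationMs / 1000);
        ByteBuffer pcm = ByteBuffer.allocateDirect(samples * 2)
                .order(ByteOrder.LITTLE_ENDIAN);
        for (int i = 0; i < samples; i++) {
            double t = (double) i / sampleRate; // time of this sample in seconds
            double amplitude = Math.sin(2 * Math.PI * frequencyHz * t);
            pcm.putShort((short) Math.round(amplitude * Short.MAX_VALUE));
        }
        pcm.flip(); // ready to read from position 0
        return pcm;
    }
}
```

For a buffer created with `ToneGenerator.sine(440.0, 48000, 20)`, `remaining()` would serve as lengthInBytes and 48000 as sampleRate.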

Send raw share data

Follow these steps to send raw screen share data.

- Have your class implement the ZoomVideoSDKShareSource interface.

- Use the sendShareFrame method in onShareSendStarted to send raw screen share data.

class RawShareDataExample : ZoomVideoSDKShareSource {

    var shareSender: ZoomVideoSDKShareSender? = null

    override fun onShareSendStarted(sender: ZoomVideoSDKShareSender?) {

        shareSender = sender

        // sendShareFrame sends one frame data, therefore you will most likely need a thread for the full frame

        shareSender?.sendShareFrame(frameBuffer, width, height, frameLength, format)

    }

    override fun onShareSendStopped() {

        shareSender = null

        // If you have a thread for onShareSendStarted, you will most likely need to cancel it and set it to null

    }

}
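Because sendShareFrame sends one frame per call, a continuous share needs something that calls it repeatedly between onShareSendStarted and onShareSendStopped. The sketch below uses a single-threaded `ScheduledExecutorService` for that purpose. The `Runnable` stands in for the actual sendShareFrame call; `ShareFramePump` and its methods are hypothetical and not part of the Video SDK.

```java
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;

final class ShareFramePump {
    private ScheduledExecutorService executor;

    /** Intended for onShareSendStarted: runs sendOneFrame at a fixed rate. */
    synchronized void start(Runnable sendOneFrame, int framesPerSecond) {
        if (framesPerSecond <= 0) {
            throw new IllegalArgumentException("framesPerSecond must be positive");
        }
        stop(); // never run two loops at once
        executor = Executors.newSingleThreadScheduledExecutor();
        long periodMs = Math.max(1, 1000L / framesPerSecond);
        executor.scheduleAtFixedRate(sendOneFrame, 0, periodMs, TimeUnit.MILLISECONDS);
    }

    /** Intended for onShareSendStopped: cancels the loop and frees the thread. */
    synchronized void stop() {
        if (executor != null) {
            executor.shutdownNow();
            executor = null;
        }
    }

    synchronized boolean isRunning() {
        return executor != null;
    }
}
```

Note that `scheduleAtFixedRate` stops rescheduling if the task throws, so a real frame-sending task would likely catch and log its own exceptions.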